AI researchers often discuss the "control problem" when considering the risks AI poses to human civilization. This is the possibility that a superintelligence could emerge that is so much smarter than humans that we would quickly lose control of it. The fear is that a sentient AI with superhuman intelligence could pursue goals and interests that conflict with ours, making it a threat to humanity. This is a legitimate concern, but is it really the greatest threat AI poses to society? According to a recent survey of more than 700 AI experts, human-level machine intelligence is at least 30 years away.

However, a different kind of control problem, one that is already within our grasp, could pose a serious threat to society if policymakers do not act quickly. Current AI technologies could be used to target and manipulate individuals with extreme precision and efficiency. Worse, this new form of personalized manipulation could be deployed at scale by corporations, government agencies, or even rogue despots to influence broad populations.

In contrast to the traditional Control Problem described above, this emerging AI threat is referred to by some as the "Manipulation Problem." Those who use the term have tracked the threat for almost two decades, and in the last 18 months it has shifted from a theoretical long-term risk to an urgent near-term one. The reason is that conversational AI has proven to be the most efficient and effective deployment mechanism for AI-driven human manipulation. Furthermore, during the last year, an AI technology called Large Language Models (LLMs) has rapidly reached a high level of maturity. As a consequence, natural conversational interactions between targeted users and AI-driven software have suddenly become a viable means of persuading, coercing, or manipulating users.

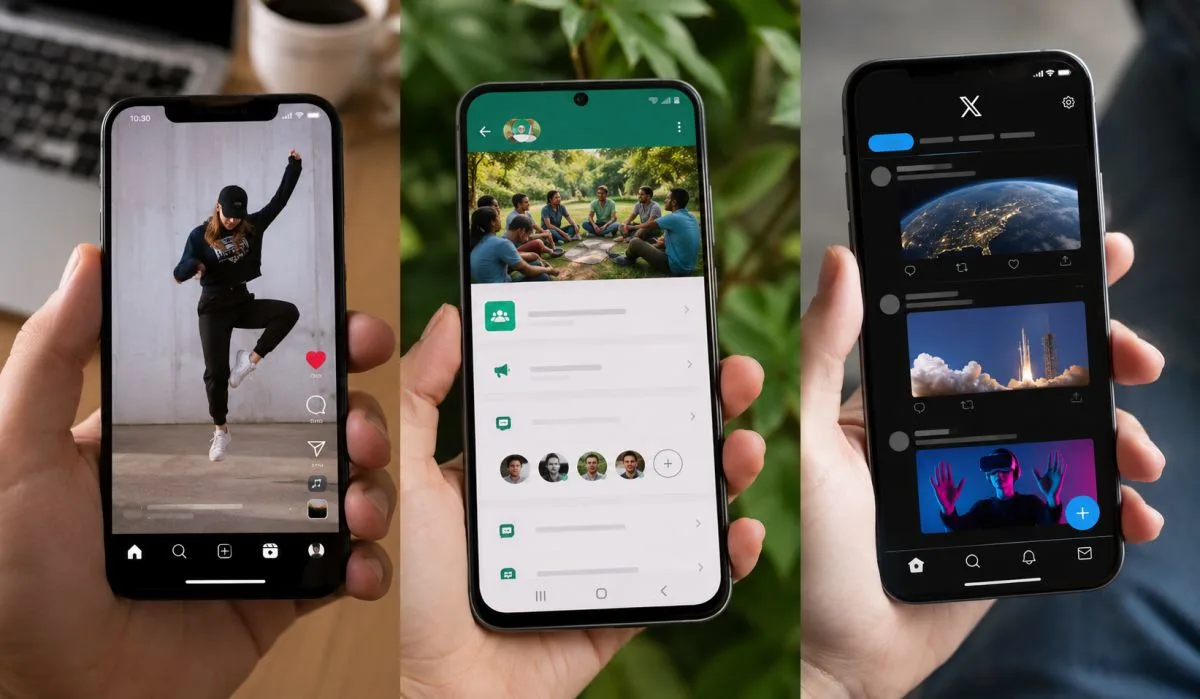

There is no doubt that AI technology is already being used to drive influence campaigns on social media platforms, but this is primitive compared to what the technology will soon be able to achieve. Although current campaigns are described as "targeted," they are more analogous to spraying buckshot at flocks of birds. The tactic directs propaganda or misinformation at a general population in the hope that a few pieces of influence will penetrate the community, resonate with its members, and spread across social media networks. In my opinion, this method of political manipulation is extremely dangerous and has caused real social damage, such as the polarization of communities, the spreading of falsehoods, and the loss of trust in legitimate governments. Even so, it will seem slow and inefficient compared to the next generation of AI-driven influence methods that will be unleashed on society in the near future.

These next-generation methods are real-time AI systems that engage targeted users in conversational interactions and skillfully pursue influence goals. They will be marketed under euphemistic terms such as Conversational Advertising, Interactive Marketing, Virtual Spokespersons, Digital Humans, or just AI Chatbots. Regardless of what we call these systems, they open terrifying vectors for misuse and abuse. This isn't about the obvious danger of unsuspecting consumers putting their trust in chatbots whose training data was polluted with errors and biases. We are speaking of something far more nefarious: the deliberate manipulation of individuals through the targeted deployment of agenda-driven conversational AI systems that persuade users through convincing interactive dialog.

Rather than firing buckshot at large populations, these new AI methods will act more like "heat-seeking missiles," identifying users as individual targets and adapting their conversational tactics to each person in real time in order to maximize their persuasive impact. The key to these strategies is the relatively new technology of LLMs, which can produce real-time, interactive human dialogue while also keeping track of conversational flow and context. These AI systems have become popular since the launch of ChatGPT in 2022. They are trained on such massive datasets that they can not only mimic human language, but also draw on a vast repository of factual information, make impressive logical inferences, and give the illusion of human-like common sense. When combined with real-time voice generation, such technologies will enable natural spoken interactions between humans and machines that are highly convincing, apparently rational, and surprisingly authoritative.

These interactions will not be with disembodied voices, but with visually realistic personas generated by AI. Digital humans are a second rapidly advancing technology contributing to the AI Manipulation Problem. The term refers to photorealistic simulated people that look, sound, move, and make expressions so much like real people that they can pass for them. These simulations can be deployed as interactive spokespeople that target consumers through video conferencing and other flat layouts. With the help of mixed reality (MR) eyewear, they can also be deployed in immersive three-dimensional worlds. Generating photorealistic digital humans in real time seemed out of reach just a few years ago, but rapid advances in computing power, graphics engines, and AI modeling techniques have made it viable in the near term. Major software vendors already offer tools that provide this capability.

For instance, Unreal recently launched MetaHuman Creator, an easy-to-use tool designed specifically for creating convincing digital humans that can be animated in real time to engage consumers interactively. Other vendors are developing similar tools. Combining digital humans and LLMs will lead to a world where we regularly interact with Virtual Spokespeople (VSPs) that look, sound, and behave like authentic individuals. According to a 2022 study conducted by researchers at Lancaster University and U.C. Berkeley, users are no longer able to distinguish between authentic human faces and those produced by artificial intelligence. The study also found that users perceived AI-generated faces as "more trustworthy" than human faces. These findings point to two very dangerous trends in the near future. First, AI-driven systems will be disguised as humans, and we won't be able to tell the difference. Second, we are likely to trust disguised AI-driven systems more than human representatives.

This is extremely dangerous. We will soon find ourselves in personalized conversations with AI-driven spokespeople that (a) are virtually indistinguishable from real people, (b) inspire greater trust than real people, and (c) may be used by corporations or state actors to pursue a specific conversational objective, such as persuading people to buy a particular product or to believe a particular falsehood. If not tightly regulated, these AI-driven systems will also analyze emotions in real time, using webcam feeds to process facial expressions, eye movements, and pupil dilation, all of which can be used to infer emotional reactions. They are also expected to process vocal inflections, which will allow them to infer changing feelings throughout a conversation. In this way, a virtual spokesperson will be able to adjust its tactics based on how a person responds to every word it speaks, learning which influence strategies are working and which are not. The potential for predatory manipulation through conversational AI is great.

Over the years, people have pushed back on these concerns about Conversational AI, arguing that human salespeople do the same thing by reading emotions and adjusting tactics, so it should not be considered a new threat. This argument is wrong for several reasons. First, these AI systems will be able to detect reactions that no human salesperson can perceive. For example, AI systems can detect not only facial expressions, but also "microexpressions" that are too rapid or too subtle for a human observer to notice, yet indicate emotional reactions, including those that the user is unaware of expressing or even feeling. Second, AI systems can recognize subtle changes in complexion, known as "blood flow patterns," that indicate emotional changes no human could detect. Finally, AI systems can track subtle changes in pupil size and eye movements and extract cues about engagement, excitement, and other internal feelings. Unless it is protected by regulation, a conversation with a conversational AI may be more perceptive and intrusive than a conversation with any human agent.

Conversational AI will also be better at crafting an effective verbal pitch. These systems are likely to be deployed by large online platforms that maintain extensive data profiles on each person's interests, views, background, and other details collected over time. When interacting with a Conversational AI system that looks, sounds, and acts like a human representative, people are really interacting with a platform that knows them better than any human could. Furthermore, the system will compile a database of how they responded during prior conversations, recording which persuasive tactics worked and which did not. Thus, Conversational AI systems will adapt not just to immediate emotional reactions, but also to behavior over time. With practice, they can learn to draw you into a conversation, convince you to accept new ideas, push your buttons so you get riled up, and ultimately drive you to buy products you don't need. They can also lead you to believe misinformation that you would normally recognize as false. The situation is extremely dangerous.

Because it is interactive, conversational AI may pose a greater threat than anything we have seen in the use of traditional or social media to promote, propagandize, or persuade. We therefore encourage regulators to focus on this issue as soon as possible in order to prevent the deployment of potentially dangerous systems. The danger here is not just the spread of harmful content, but personalized human manipulation on a large scale. To protect our cognitive liberty against this threat, we must have legal protections in place. AI systems are already capable of beating the world's best chess and poker players. The average person has little chance of resisting a conversational influence campaign that has access to their personal history, processes their emotions in real time, and uses AI to adjust its tactics accordingly.

By Rashmi Goel